Stop Letting AI Ruin Your Attribution: How To Track Link Previews, Bots And Real Clicks Separately In 2026

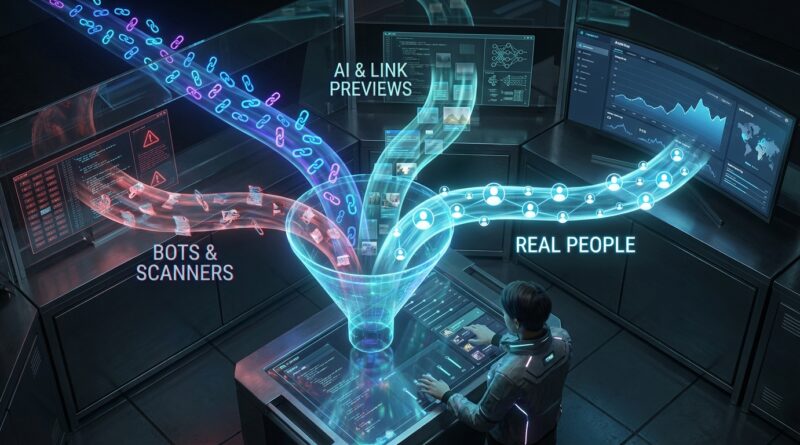

Your dashboard says 1,200 clicks. Google Analytics shows 640 sessions. You are not going mad, and your campaign is not necessarily broken. The problem is that a lot of those “clicks” were never real human visits in the first place.

AI assistants fetch links to build previews. Messaging apps pre-load pages. Email security tools test every URL before a person even opens the message. Add old-fashioned bots and crawlers, and suddenly your short link stats look brilliant while your actual traffic looks flat. That gap is costing marketers real money, because budgets get shifted toward channels that only look good on paper.

The fix is to stop treating every redirect hit as a click. In 2026, you need to track three separate things: raw link hits, filtered human clicks, and confirmed landing page visits. Once you split those numbers properly, attribution starts making sense again.

⚡ In a Hurry? Key Takeaways

- Do not count every short-link hit as a real click. Separate raw hits, suspected bots/previews, and confirmed human visits.

- Start filtering at the redirect layer using user agent checks, HEAD request detection, IP reputation, and short time-window rules.

- Expect a gap either way: Google Analytics sessions will rarely match even a well-filtered click count exactly, so base reporting and budget decisions on filtered clicks and confirmed visits, not on inflated raw hits.

Why click numbers are so messy now

Ten years ago, a click was usually a click. Not perfect, but close enough. That is no longer true.

Today, links get touched by all sorts of systems before a human even sees the page. An AI tool may fetch the destination to create a summary. Slack, iMessage, WhatsApp, LinkedIn, and email apps may request the URL to build a preview card. Corporate security tools often scan links the second an email lands in an inbox. Some of them even follow redirects and load parts of the page.

If your shortener logs all of that as “clicks,” your reports are inflated from the very first line.

The three numbers you should track instead

If you want to separate bot clicks from real clicks in URL shortener analytics, the easiest way to think about it is this:

1. Raw link hits

This is every request that touches the short URL. Keep this number. It is useful for diagnostics. But do not present it as campaign performance.

2. Filtered human clicks

This is your best estimate of real people intentionally clicking. It excludes obvious bots, scanners, previews, and duplicate machine requests.

3. Confirmed landing page visits

This is what your analytics platform records once the destination page actually loads and tracking runs. It is often lower than filtered clicks, because some people block scripts, lose their connection, or close the tab before the page finishes loading and the tag fires.

If you only remember one thing, remember this: raw hits are not visits.
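To keep the three numbers from blurring together, it can help to store them side by side with plain labels. The sketch below is a minimal Python illustration, not a feature of any particular shortener; the class name `LinkReport` and its methods are hypothetical. The usage line reuses the 1,200 hits and 640 sessions from the opening example, with a made-up figure of 800 filtered clicks in between.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class LinkReport:
    """The three numbers for one short link, reported side by side (hypothetical)."""

    raw_hits: int          # every request that touched the short URL
    filtered_clicks: int   # best estimate of intentional human clicks
    confirmed_visits: int  # landing page loaded and tracking fired

    def __post_init__(self) -> None:
        if min(self.raw_hits, self.filtered_clicks, self.confirmed_visits) < 0:
            raise ValueError("counts must be non-negative")
        # Filtered clicks are a subset of raw hits, so they can never exceed them.
        if self.filtered_clicks > self.raw_hits:
            raise ValueError("filtered_clicks cannot exceed raw_hits")

    def as_labeled_rows(self) -> List[Tuple[str, int]]:
        # Plain labels so nobody mistakes raw hits for human visits.
        return [
            ("Raw URL hits", self.raw_hits),
            ("Filtered human clicks", self.filtered_clicks),
            ("Confirmed visits", self.confirmed_visits),
        ]

    def filter_rate(self) -> float:
        """Share of raw hits that survived bot and preview filtering."""
        return self.filtered_clicks / self.raw_hits if self.raw_hits else 0.0

    def confirmation_rate(self) -> float:
        """Confirmed visits per filtered click (usually below 1.0)."""
        return self.confirmed_visits / self.filtered_clicks if self.filtered_clicks else 0.0


# 1,200 and 640 come from the opening example; 800 is a made-up filtered count.
report = LinkReport(raw_hits=1200, filtered_clicks=800, confirmed_visits=640)
for label, value in report.as_labeled_rows():
    print(f"{label}: {value}")
print(f"filter rate {report.filter_rate():.2f}, confirmation rate {report.confirmation_rate():.2f}")
```

Reporting the two rates alongside the counts makes the gap between layers visible instead of hiding it in a single "clicks" figure.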

Why Google Analytics and your shortener never match perfectly

This is where many marketers get tripped up. A shortener logs activity at the moment of redirect. Google Analytics logs a session only after the landing page loads and the analytics tag fires.

So even a legitimate human click can fail to become a session. Maybe the page was slow. Maybe the browser blocked tracking. Maybe the user clicked and backed out. That is why some difference is normal.

If you want a deeper explanation of that mismatch, this guide is worth reading: Your Short Links Are Lying To You: How To Finally Match Clicks With Google Analytics (Without Losing Data).

How to separate bot clicks from real clicks in your shortener

You do not need a magic AI detector. You need a sensible filtering stack. Think of it like airport security. No single check catches everything, but several together do a very good job.

Check the request method

Many preview systems and scanners send HEAD requests, which ask only for the response headers and never load the page. A browser following a link sends GET. Plenty of bots use GET as well, though, so GET alone is not proof of a human.

Rule of thumb: flag HEAD requests as non-human unless you have strong evidence otherwise.
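At the redirect layer, HEAD and GET arrive as different methods, so this check is cheap. Here is a minimal sketch using Python's standard `http.server` module, where `BaseHTTPRequestHandler` dispatches each method to its own `do_<METHOD>` handler. The link table, the `HIT_LOG` list and its field names are hypothetical; a production shortener would write to a database and run behind a proper web server.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical in-memory link table and hit log, for illustration only.
LINKS = {"/promo": "https://example.com/landing"}
HIT_LOG: list = []


class RedirectHandler(BaseHTTPRequestHandler):
    def _redirect(self, suspected_non_human: bool) -> None:
        # Every request is logged as a raw hit, tagged with the method signal.
        HIT_LOG.append({
            "path": self.path,
            "method": self.command,
            "suspected_non_human": suspected_non_human,
        })
        target = LINKS.get(self.path)
        if target is None:
            self.send_error(404)
            return
        # Both methods still get the redirect, so link previews keep working.
        self.send_response(302)
        self.send_header("Location", target)
        self.end_headers()

    def do_HEAD(self) -> None:
        # HEAD asks for headers only: flag it as non-human by default.
        self._redirect(suspected_non_human=True)

    def do_GET(self) -> None:
        # GET is what a browser sends on a link click, but bots send it too,
        # so this only means "not flagged yet", not "human".
        self._redirect(suspected_non_human=False)

    def log_message(self, format: str, *args) -> None:
        pass  # keep the console quiet; HIT_LOG is the record
```

To try it locally, you could start it with `HTTPServer(("127.0.0.1", 8080), RedirectHandler).serve_forever()`, then send `curl -I` (a HEAD request) and a plain `curl` (a GET) to `/promo`. Both get the same redirect; only the label in the log differs.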

Inspect the user agent

User agent strings still matter. Many bots, crawlers, and security tools identify themselves clearly. Look for patterns tied to:

- Email security scanners

- Preview generators

- Known crawlers and indexing bots

- Monitoring tools

This is not perfect because some bots spoof browsers, but it catches a lot of the noise.
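A user-agent check usually comes down to a pattern match. The sketch below assumes a hand-maintained list; the tokens are examples of names that crawlers, preview fetchers, AI agents and HTTP libraries commonly put in their user agents, not a complete or authoritative list.

```python
import re
from typing import Optional

# Illustrative, non-exhaustive tokens. Real bot lists change constantly,
# so treat these as placeholders to maintain, not a definitive filter.
NON_HUMAN_UA_TOKENS = [
    r"bot/",                  # many crawlers, e.g. "Googlebot/2.1", "bingbot/2.0"
    r"crawler", r"spider",
    r"facebookexternalhit",   # Facebook link previews
    r"Slackbot",              # Slack link unfurling
    r"WhatsApp",
    r"GPTBot", r"ChatGPT-User", r"ClaudeBot", r"PerplexityBot",
    r"curl/", r"python-requests", r"Go-http-client",
]
NON_HUMAN_UA = re.compile("|".join(NON_HUMAN_UA_TOKENS), re.IGNORECASE)


def ua_looks_non_human(user_agent: Optional[str]) -> bool:
    """True if the user agent is missing or matches a known non-human token."""
    if not user_agent:
        # Mainstream browsers send a user agent, so a missing one is suspicious.
        return True
    return NON_HUMAN_UA.search(user_agent) is not None
```

Because some bots spoof browser user agents, a `False` result only means "not obviously a bot", which is why this check sits alongside the others rather than replacing them.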

Look at timing patterns

Machines are fast and repetitive. If the same link is hit multiple times within a second or two from the same IP range or identical fingerprint, that is often a scanner or preview process, not a person changing their mind five times.

Good filters often use a short deduplication window, such as:

- Group repeated hits within 1 to 5 seconds

- Treat the first suspicious hit as a preview or scan

- Only count a later full GET with browser-like signals as a possible human click
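One simple reading of these rules, sketched in Python: chain together hits on the same link from the same source while each gap stays within the window, and flag any chain of two or more hits. The `Hit` fields and the 2-second default are assumptions, and what counts as a "source" (exact IP, IP range, or fingerprint) is up to you.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Hit:
    link_id: str      # which short link was requested
    source: str       # IP, IP range, or fingerprint (hypothetical field)
    timestamp: float  # seconds since epoch


def find_burst_hits(hits: List[Hit], window_s: float = 2.0) -> Set[int]:
    """Return indices of hits that belong to a machine-style burst.

    Hits for the same link from the same source are chained while each gap
    is <= window_s. Any chain of two or more hits is flagged; a lone hit is
    left for the other checks to judge.
    """
    by_key = defaultdict(list)
    for i, hit in enumerate(hits):
        by_key[(hit.link_id, hit.source)].append(i)

    flagged: Set[int] = set()
    for indices in by_key.values():
        indices.sort(key=lambda idx: hits[idx].timestamp)
        chain = [indices[0]]
        for prev, cur in zip(indices, indices[1:]):
            if hits[cur].timestamp - hits[prev].timestamp <= window_s:
                chain.append(cur)
            else:
                if len(chain) > 1:
                    flagged.update(chain)
                chain = [cur]
        if len(chain) > 1:
            flagged.update(chain)
    return flagged
```

A later GET from the same source, well outside the window, starts a fresh chain and is not flagged here, so it can still be judged on its browser-like signals.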

Check whether JavaScript ever runs

A redirect system alone cannot always prove a human visit. But if the destination page loads and a lightweight event fires, that is much closer to a real visit than a bare redirect request.

This is why pairing redirect-layer filtering with page-level analytics is so useful. One tells you who touched the link. The other tells you who actually arrived.
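One common way to tie the two layers together is a click ID: the redirect appends a random ID to the destination URL, and a small script on the landing page reports it back. The sketch shows only the server-side half in Python; the `cid` parameter name and the helper functions are hypothetical, and the landing-page script that sends the ID back is assumed rather than shown.

```python
import secrets
from typing import Set
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# "cid" is a hypothetical query parameter name; use whatever your stack expects.
CLICK_ID_PARAM = "cid"


def new_click_id() -> str:
    # Random, hard-to-guess ID generated at redirect time.
    return secrets.token_hex(8)


def add_click_id(destination: str, click_id: str) -> str:
    """Append the click ID to the destination URL, keeping existing parameters."""
    parts = urlsplit(destination)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.append((CLICK_ID_PARAM, click_id))
    return urlunsplit(parts._replace(query=urlencode(query)))


def confirmed_click_ids(redirect_ids: Set[str], beacon_ids: Set[str]) -> Set[str]:
    # Confirmed = issued at the redirect AND reported back by the landing page.
    # Beacon IDs with no matching redirect are ignored (stale or forged).
    return redirect_ids & beacon_ids
```

The intersection is deliberately strict: an ID only counts when both layers saw it, which matches the idea that one layer shows who touched the link and the other shows who arrived.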

Use IP and ASN reputation carefully

Some traffic comes from cloud providers, security gateways, and known bot networks. That can be a strong clue. But be careful. Real people also browse from corporate networks, VPNs, and mobile carrier infrastructure.

Use IP reputation as one signal, not the whole decision.
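To keep IP reputation as one signal rather than the whole decision, it can return a small score contribution instead of a block. The networks below are RFC 5737 documentation ranges used purely as placeholders; a real setup would load cloud-provider or security-gateway ranges from a maintained source, and ASN lookups would need an external database that is not shown here.

```python
import ipaddress

# Placeholder list: RFC 5737 documentation ranges standing in for a real
# cloud-provider or security-gateway list. Not a real reputation feed.
SUSPECT_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]


def infrastructure_points(ip: str, points: int = 2) -> int:
    """Return a small suspicion contribution, never a verdict on its own.

    Corporate networks, VPNs and mobile carriers also carry real people,
    so a match only adds points toward a combined score.
    """
    addr = ipaddress.ip_address(ip)
    # An IPv6 address is never "in" an IPv4 network, so it simply scores 0 here.
    return points if any(addr in net for net in SUSPECT_NETWORKS) else 0
```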

Watch for no-cookie, no-render behavior

Many scanners request the page but do not behave like a real browser after that. They often ignore cookies, do not render the page, do not execute scripts fully, and never trigger normal on-page events.

Again, one clue is weak. Several clues together are useful.
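Combining weak clues can be as simple as adding up points. The weights and threshold below are hypothetical placeholders, chosen so that a HEAD request or a bot user agent is enough on its own, while a suspect network, a missing cookie or a missing page event only matter in combination.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class HitSignals:
    head_request: bool = False     # HEAD instead of GET
    non_human_ua: bool = False     # user agent matched a bot/preview pattern
    in_burst: bool = False         # part of a rapid repeat from one source
    suspect_network: bool = False  # cloud or security-gateway IP range
    no_cookie: bool = False        # no first-party cookie seen on follow-up requests
    no_page_event: bool = False    # landing page never reported back


# Hypothetical weights in points; tune them against traffic you have reviewed.
WEIGHTS = {
    "head_request": 6,
    "non_human_ua": 6,
    "in_burst": 4,
    "suspect_network": 2,
    "no_cookie": 2,
    "no_page_event": 3,
}


def suspicion_points(signals: HitSignals) -> int:
    # Add the weight of every signal that is set on this hit.
    return sum(WEIGHTS[f.name] for f in fields(signals) if getattr(signals, f.name))


def likely_non_human(signals: HitSignals, threshold: int = 6) -> bool:
    return suspicion_points(signals) >= threshold
```

Integer points keep the threshold comparison exact and easy to explain to stakeholders; raising the threshold is the simplest guard against filtering too aggressively.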

A practical filtering model that works for most marketers

If you want something you can put into practice now, use a simple three-bucket model.

Bucket A: Non-human or likely non-human

- Known bot or scanner user agents

- HEAD requests

- Burst requests in tiny time windows

- Known preview fetchers

- Requests from suspicious infrastructure with no browser behavior

Bucket B: Unverified clicks

- Looks browser-like at redirect

- No obvious bot pattern

- But no confirmed landing-page event yet

Bucket C: Confirmed human visits

- Redirect looked legitimate

- Landing page loaded

- Analytics or first-party event fired successfully

This gives you much cleaner reporting. It also keeps you honest with clients and stakeholders.
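The bucket logic itself is small once the signals exist. This sketch assumes an upstream filter has already produced a `likely_non_human` flag for each hit and that you know whether a landing-page event fired; the enum and function names are hypothetical.

```python
from collections import Counter
from enum import Enum
from typing import Iterable, Tuple


class Bucket(Enum):
    A_NON_HUMAN = "Non-human or likely non-human"
    B_UNVERIFIED = "Unverified click"
    C_CONFIRMED = "Confirmed human visit"


def assign_bucket(likely_non_human: bool, landing_event_fired: bool) -> Bucket:
    # Bucket A is checked first: a scanner that happens to run JavaScript
    # should not be promoted to a confirmed visit.
    if likely_non_human:
        return Bucket.A_NON_HUMAN
    # Browser-like redirect plus a landing-page event: bucket C.
    if landing_event_fired:
        return Bucket.C_CONFIRMED
    # Browser-like redirect, but no confirmation yet: bucket B.
    return Bucket.B_UNVERIFIED


def bucket_counts(hits: Iterable[Tuple[bool, bool]]) -> Counter:
    """Count hits per bucket from (likely_non_human, landing_event_fired) pairs."""
    return Counter(assign_bucket(nh, fired) for nh, fired in hits)
```

Under this model the buckets map straight back to the three reporting numbers: raw hits are A + B + C, filtered human clicks are B + C, and confirmed visits are C.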

What this changes in real marketing reports

Once you split out these buckets, a lot of confusing campaign results suddenly become easier to explain.

Email campaigns

Email is one of the worst offenders because security systems often test links automatically. If your ESP says a campaign had massive click activity in the first minute after send, be suspicious. Humans are not that synchronised. Security filters are.
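A quick way to put a number on that suspicion is to measure how much of the click activity lands right after the send. This is a sketch: the 60-second window and the 0.5 threshold are hypothetical starting points, not industry benchmarks, and the click timestamps are assumed to come from your own redirect logs.

```python
from datetime import datetime, timedelta
from typing import Sequence


def early_click_share(
    send_time: datetime,
    click_times: Sequence[datetime],
    window: timedelta = timedelta(seconds=60),
) -> float:
    """Share of recorded clicks that arrived within `window` of the send."""
    if not click_times:
        return 0.0
    cutoff = send_time + window
    early = sum(1 for t in click_times if send_time <= t < cutoff)
    return early / len(click_times)


def looks_scanner_driven(share: float, threshold: float = 0.5) -> bool:
    # Hypothetical threshold: compare against your own historical sends.
    return share >= threshold
```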

Paid campaigns

When judging ad performance, optimise against confirmed visits and downstream conversions, not raw short-link hits. Otherwise, you may reward placements that attract machine traffic.

Influencer campaigns

Influencer links can be hammered by social preview fetchers and apps that pull link metadata. If you only look at shortener clicks, one creator may seem to outperform another when, in reality, their audience simply uses a platform that generates more background requests.

Common mistakes that keep ruining attribution

Counting redirect hits as the source of truth

They are useful, but they are the noisiest metric in the stack.

Filtering too aggressively

If your rules are too strict, you throw away good traffic. This is especially risky with privacy-heavy browsers and corporate users.

Using only Google Analytics

That misses people who clicked but never fully loaded the page, plus users with blocked scripts. Analytics is valuable, but it is not the whole story.

Using only the shortener

That creates the opposite problem. You count lots of machine traffic as campaign success.

What a better setup looks like in 2026

A solid modern setup usually includes:

- A shortener or redirect tool that logs raw requests

- Bot and preview detection at the redirect layer

- A filtered human-click metric

- Page-level analytics for confirmed visits

- Clear reporting labels so nobody confuses these numbers

That last point matters more than people think. If your dashboard just says “clicks,” someone will assume they are all human. Label them plainly:

- Raw URL hits

- Filtered human clicks

- Confirmed visits

Simple advice if you are choosing a tool right now

Ask these questions before trusting any shortener analytics:

- Does it distinguish bots, previews, and scanners from human clicks?

- Can it show raw hits separately from filtered clicks?

- Does it support redirect-level logging plus destination-page confirmation?

- Can you review suspicious traffic instead of hiding it completely?

- Does it explain why counts differ from analytics platforms?

If the answer is no, the reporting will probably create more confusion than clarity.

At a Glance: Comparison

| Metric | What it counts | Verdict |
|---|---|---|
| Basic shortener click count | Counts almost every request to the link, including previews, scanners, bots, and duplicate hits | Useful for raw diagnostics, bad for performance reporting |
| Filtered human clicks | Applies bot detection, request filtering, timing rules, and browser signals at the redirect layer | Best number for click attribution and channel comparison |
| Confirmed analytics visits | Only records traffic when the landing page loads and analytics tracking fires | Best for on-site engagement, but usually lower than click counts |

Conclusion

The recent frustration from marketers makes perfect sense. Basic shorteners still count every hit as a click, while modern browsers, AI previews, and spam filters poke links in the background all day. Meanwhile, Google Analytics only counts a visit once the landing page loads and the tracking tag fires, so the gap between clicks and sessions keeps getting wider. The fix is not to pick one number and hope for the best. It is to separate raw hits from likely non-human activity at the redirect layer, then confirm human visits with page-level analytics. Do that, and your attribution model gets much cleaner. Your client reports become more honest. And your budget decisions for paid, email, and influencer campaigns get a lot smarter, because you are finally optimising for real people instead of machine noise.